|

Moving Towards Terabit/sec Scientific Dataset Transfers: the Large Hadron Collider (LHC) Challenge

About Bandwidth Challenge

In partnership with the California Institute of Technology (Caltech), the University of Florida, and other universities and laboratories across the world, a team from the Center for Internet Augmented Research and Assessment (CIARA) and the Physics department of Florida International University (FIU) won the SuperComputing 2009 Bandwidth Challenge.

The SuperComputing Conference 2009 was held November 14–20, 2009 in Portland, Oregon. An international team of physicists, computer scientists, and network engineers led by Caltech demonstrated storage-to-storage physics dataset transfers of up to 100 Gbps (gigabits per second) sustained in one direction, and well above 100 Gbps in total when moving data bidirectionally, using a total of fifteen 10 Gbps drops at the Caltech booth.

The FIU team joined the contest in part to help test FIU's new 10 Gigabit per second network infrastructure and to learn whether, and how, multi-Gbps data transfers could be achieved from the new campus network. The local computing hardware used is part of FIU's Tier3 center, which is normally used for the analysis and processing of Compact Muon Solenoid (CMS) data. The winning entry, entitled "Moving Towards Terabit/sec Scientific Dataset Transfers: the LHC Challenge", demonstrated a sustained aggregate rate of 115 Gigabits per second over the hour-long contest. The FIU Tier3 center's contribution alone amounted to a disk-to-disk outbound rate of about 6.3 Gbps.
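To put these rates in perspective, the short sketch below converts the quoted figures into approximate data volumes. It assumes decimal units (1 Gbps = 10^9 bits per second, 1 TB = 10^12 bytes) and that both rates were held for the full hour, which the text does not state explicitly for FIU's share; the function and variable names are illustrative only.

```python
# Rates quoted in the text. Decimal units and a constant rate over the
# whole hour are assumptions made for this illustration.
AGGREGATE_GBPS = 115.0   # sustained aggregate rate during the contest
FIU_GBPS = 6.3           # FIU Tier3 disk-to-disk outbound rate (approximate)
CONTEST_SECONDS = 3600   # hour-long contest


def terabytes_moved(rate_gbps: float, seconds: float) -> float:
    """Convert a sustained rate in Gbps into terabytes transferred."""
    bits = rate_gbps * 1e9 * seconds
    return bits / 8 / 1e12


total_tb = terabytes_moved(AGGREGATE_GBPS, CONTEST_SECONDS)
fiu_tb = terabytes_moved(FIU_GBPS, CONTEST_SECONDS)
fiu_share = FIU_GBPS / AGGREGATE_GBPS  # FIU's fraction of the aggregate rate

print(f"Total moved in one hour: {total_tb:.2f} TB")
print(f"FIU moved in one hour:   {fiu_tb:.3f} TB")
print(f"FIU share of aggregate:  {fiu_share:.1%}")
```

Under these assumptions the team moved roughly 52 TB in the hour, of which FIU's outbound traffic accounts for a little under 3 TB, or about 5.5% of the aggregate rate.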

Following the Bandwidth Challenge, the team continued its tests and established a world-record data transfer between the Northern and Southern hemispheres, sustaining 8.26 Gbps in each direction on a 10 Gbps link connecting Sao Paulo and Miami.
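As a rough illustration of what this record means for the link, the sketch below computes the per-direction utilization and the combined bidirectional rate. It assumes a full-duplex link offering 10 Gbps in each direction, which is typical but not stated in the text; the names are illustrative.

```python
# Figures quoted in the text; full-duplex operation is an assumption.
LINK_CAPACITY_GBPS = 10.0   # capacity of the Sao Paulo - Miami link
SUSTAINED_GBPS = 8.26       # sustained rate in each direction


def utilization(rate_gbps: float, capacity_gbps: float) -> float:
    """Return the fraction of link capacity used by a sustained rate."""
    if capacity_gbps <= 0:
        raise ValueError("capacity must be positive")
    return rate_gbps / capacity_gbps


per_direction = utilization(SUSTAINED_GBPS, LINK_CAPACITY_GBPS)
combined_gbps = 2 * SUSTAINED_GBPS  # both directions added together

print(f"Utilization per direction: {per_direction:.1%}")
print(f"Combined bidirectional rate: {combined_gbps:.2f} Gbps")
```

Under that assumption, each direction ran at about 83% of the link's nominal capacity.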

Network Diagrams

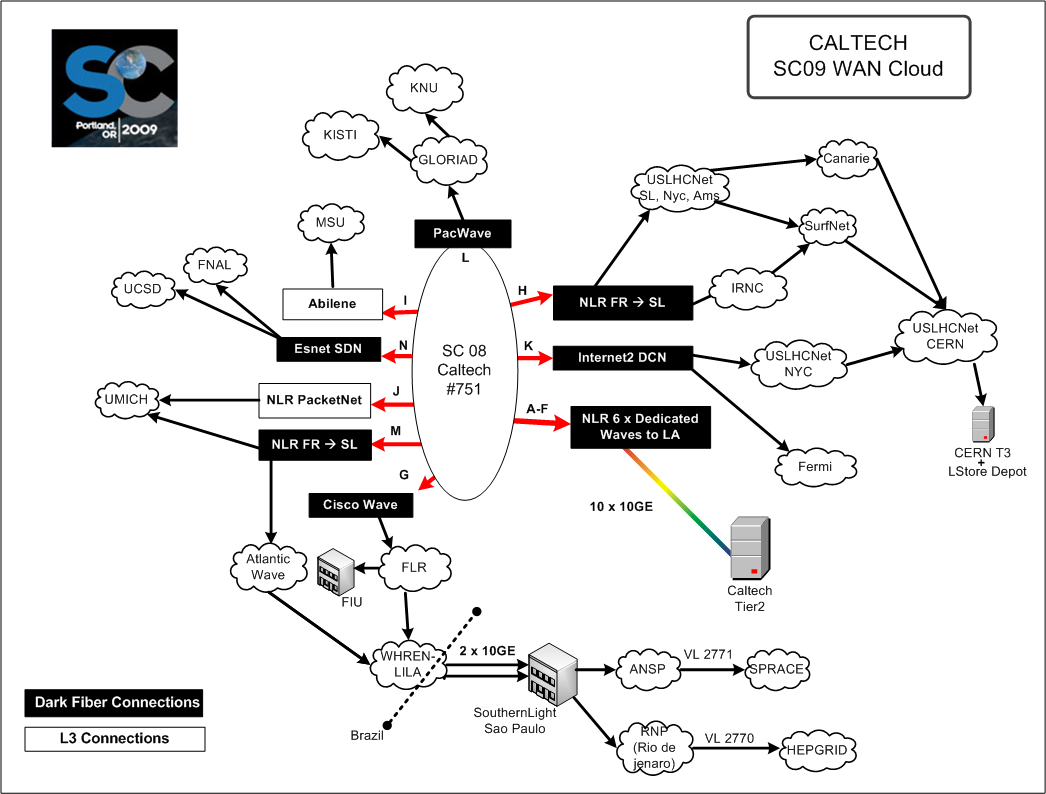

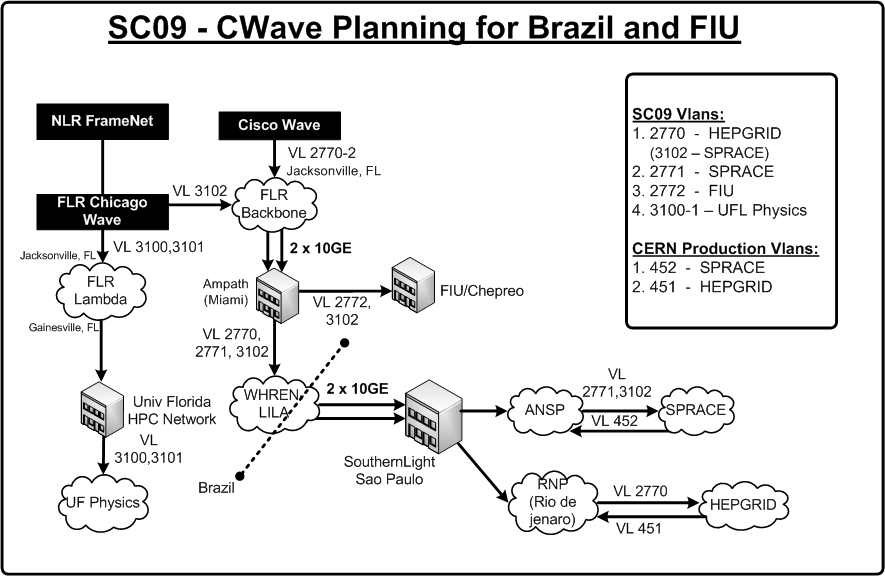

The team was led by Caltech and included people and resources from FIU, the University of Florida, the University of Michigan, Fermilab, CERN, and other collaborators in Brazil and Europe. Each site was connected via one or more dedicated private networks, totaling 15 separate 10 Gbps links. One of these links, the Cisco Wave (CWave), was connected to FIU's new 10 GigE campus backbone through Ampath and the Florida Lambda Rail.

Caltech SC09 WAN Cloud

SC09 - CWave Planning for FIU and Brazil

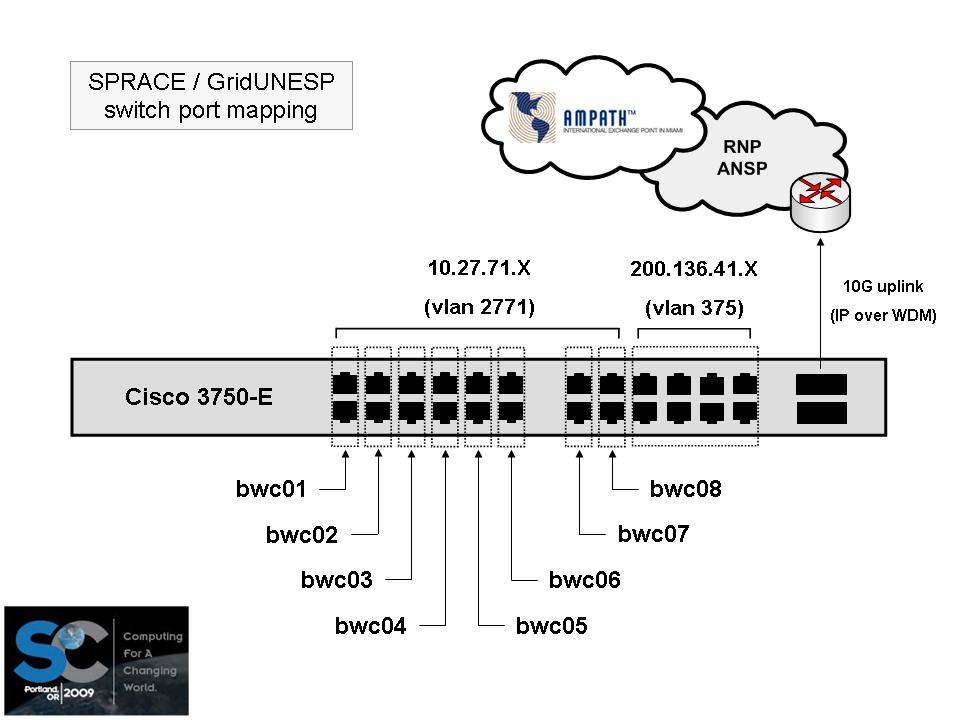

IPs for CWave

Result Graphs

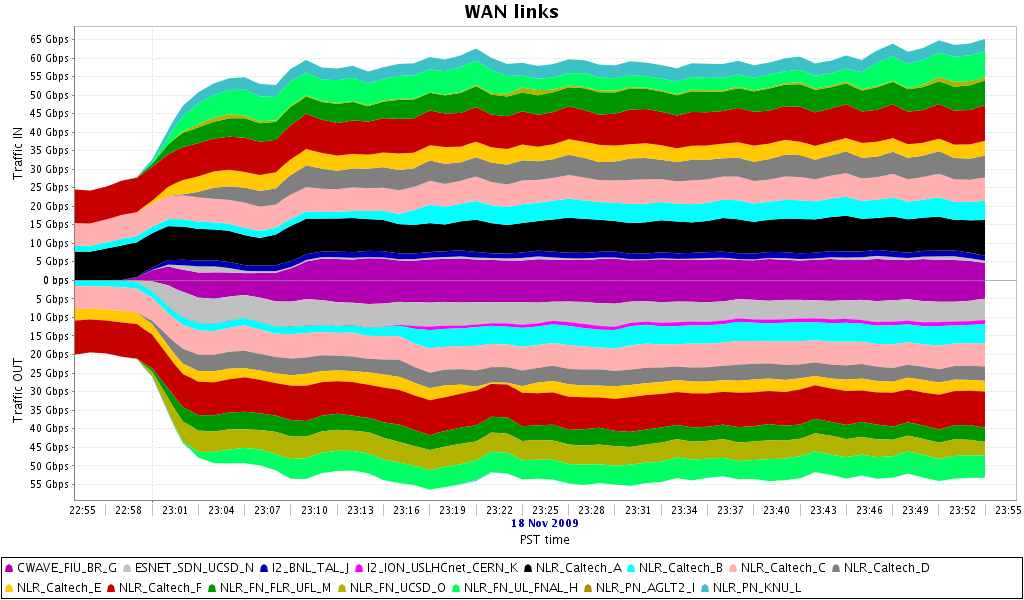

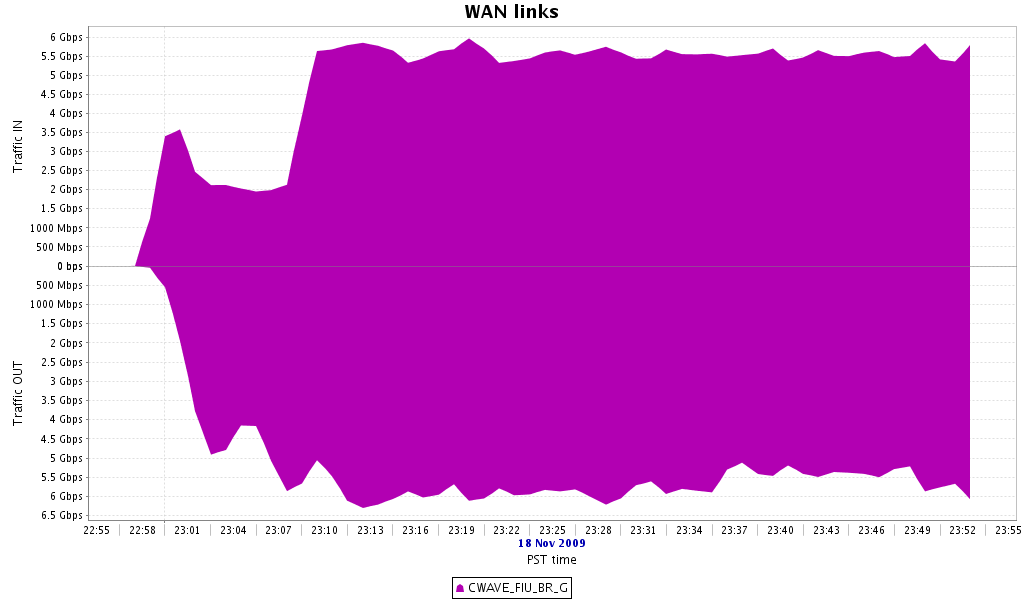

WAN Links 1 (22:55 - 23:55 PST)

CWave Link2 between FIU and Brazil (22:55 - 23:55 PST)

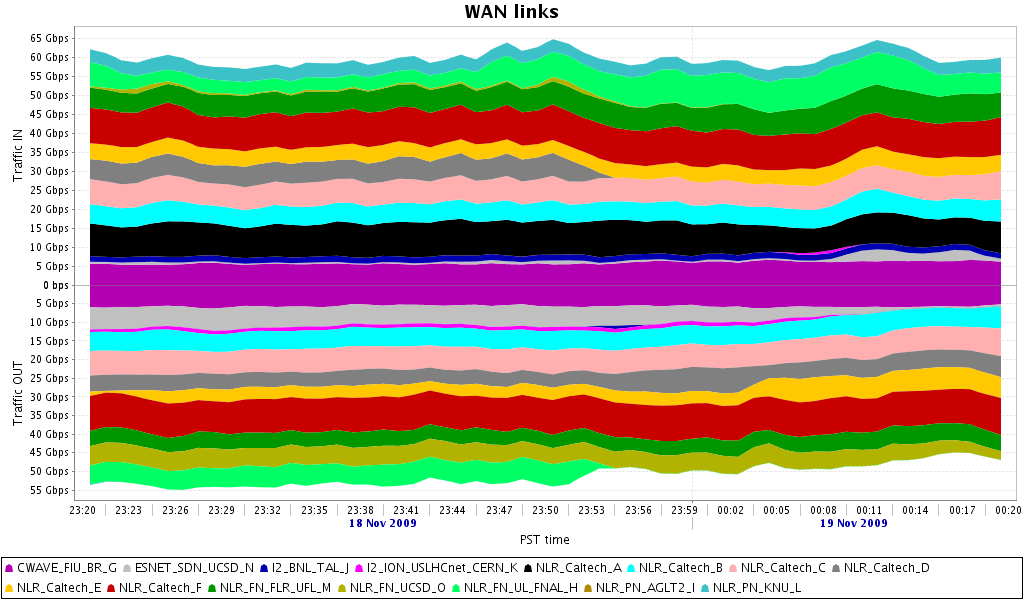

WAN Links 2 (23:20 - 00:20 PST)

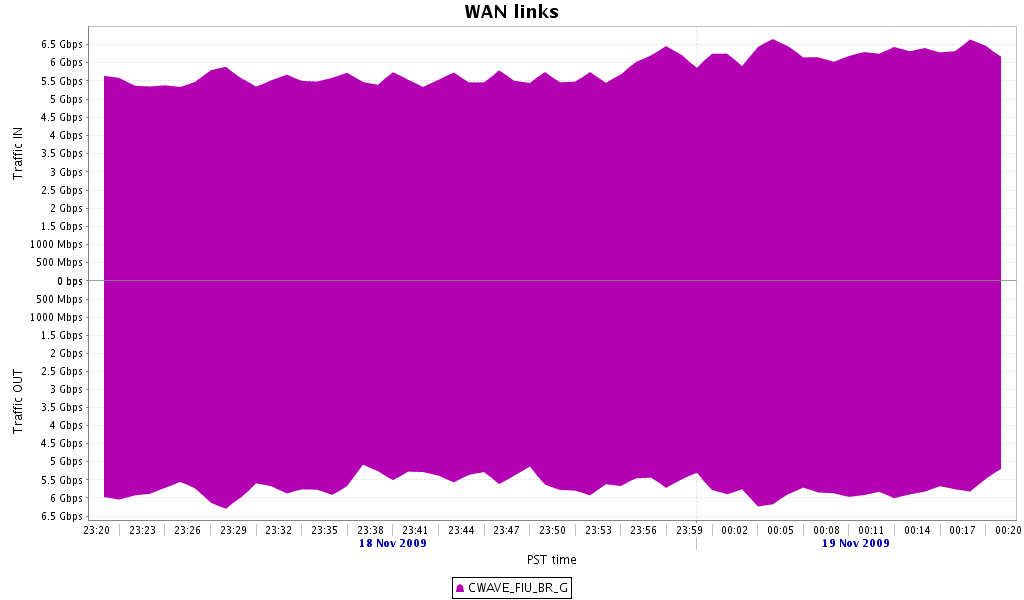

CWave Link2 between FIU and Brazil (23:20 - 00:20 PST)

The two graphs (WAN Links 1 and WAN Links 2) show the aggregate bandwidth utilized by the team during the challenge. The vertical axis is in Gbps and the horizontal axis represents wall-clock time. Each band corresponds to the transfer rate for a particular network link. FIU's contribution corresponds to the pink/purplish band at the very center, between 0 and 7 Gbps. The two CWave link graphs show the aggregate bandwidth used between FIU and Brazil.

Quotes

Luis Fernandez Lopez, CIARA Research Scientist, said, "It is the first time that we carried out our demonstration without any network failure or interference from the network. I am glad that we were able to maintain 6 Gbps (approx.) during our demonstration which also made us the second largest high energy physics contributors."

Justin Kraft, a participant from FIU, said, "Winning the Bandwidth Challenge was definitely a great outcome. The greatest part of the event was getting the chance to work with such a fantastic skilled group of people from all over the world. I have never worked on a team as well qualified and enthusiastic as this one."

|